In mathematics, especially in probability theory and statistics, a probability distribution describes the possible values of a random variable together with the probability of each outcome of a trial.

Probability distributions are one of the most important concepts in data science and machine learning. They are essential in data analysis, especially for understanding the characteristics of the data. You may already have come across these notions of distribution in theory several times. However, I have always been curious about how to visualize these probability distributions in Python.

In this article, we’ll look at common probability distributions and try to understand their differences. In addition to this, you will also learn how to visualize common probability distributions in Python. The main points described in this article are listed below.

Table of contents

- What is a probability distribution?
- Types of data
- Elements of the probability distribution
  - Probability mass function
  - Probability density function
- Discrete probability distribution
  - Binomial distribution
  - Poisson distribution
- Continuous probability distribution
  - Normal distribution
  - Uniform distribution

Let’s start by understanding what a probability distribution is.

What is a probability distribution?

In mathematics, especially in probability theory and statistics, a probability distribution gives the probabilities of the possible values of a random variable in a trial. Machine learning and data science make extensive use of probability distributions. In machine learning we have to deal with large amounts of data, and the process of finding patterns in that data relies heavily on probability distributions.

Most machine learning models have to deal with uncertainty in the data, which is why probability theory is so relevant to the modelling process. To choose a suitable probability distribution for a machine learning problem, we first need to classify the data, because the type of data determines which kinds of distribution can describe it. Let’s start by understanding the categories of data.

Types of data

In machine learning we work with data in many different formats. A dataset can be viewed as a sample drawn from a larger population. We need to identify the patterns in this sample so that we can make predictions about the entire population.

For example, suppose you want to predict the price of a vehicle based on its specific characteristics. After statistically analyzing a sample of vehicle records, you can predict the prices of vehicles from different companies.

Looking at the scenario above, we can say that a record is made up of two types of data elements.

- Numerical: This type of data can be further divided into two types.
  - Discrete: This kind of numeric data can only take certain values, such as the number of apples in a basket or the number of people in a team.
  - Continuous: This kind of numeric data can take any real value, including fractional values, e.g. tree height or tree width.
- Categorical (names, labels, etc.): These are categories such as gender, status, etc.

Discrete random variables in a dataset are described by a probability mass function, while continuous random variables are described by a probability density function.
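To make the distinction concrete, here is a minimal sketch that estimates an empirical PMF from discrete counts and an empirical PDF from continuous measurements. The data (apples per basket and tree heights) are hypothetical and simulated with NumPy purely for illustration; the distributions and parameters used to generate them are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Hypothetical discrete data: number of apples in 1,000 baskets (values 3..7)
apples = rng.integers(low=3, high=8, size=1000)

# Empirical PMF: relative frequency of each distinct value
values, counts = np.unique(apples, return_counts=True)
pmf = counts / counts.sum()
for v, p in zip(values, pmf):
    print(f"P(apples = {v}) ≈ {p:.3f}")

# Hypothetical continuous data: tree heights in metres
heights = rng.normal(loc=12.0, scale=2.0, size=1000)

# Empirical PDF: a histogram normalised so that the total bar area is 1
density, edges = np.histogram(heights, bins=20, density=True)
widths = np.diff(edges)
print("Total area under the histogram:", np.sum(density * widths))
```

For the discrete variable, each value gets its own probability and these probabilities sum to 1. For the continuous variable, individual heights are almost never repeated, so the histogram describes a density whose area, not height, sums to 1.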

Here we have seen how to classify data types. A probability distribution describes the probabilities of the various possible outcomes of an experiment, and the type of data determines which kind of distribution applies. Let’s take a closer look at how probability distributions are classified.

Elements of the probability distribution

The following two functions are used to describe a probability distribution:

Probability mass function: This function gives the probability that a discrete random variable is exactly equal to a particular value. It is also referred to as a discrete probability distribution.

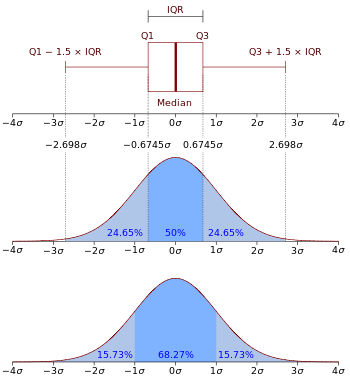

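Probability density function: This function describes how the probability of a continuous random variable is spread over its range of values; the probability that the variable falls within a specified range is the area under the curve over that range. It can also be called a continuous probability distribution.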

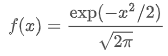

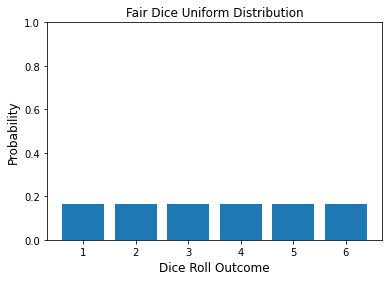

The image above shows the probability density function of the normal distribution. These two functions correspond to the two main types of probability distributions: discrete and continuous.
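Because a density value is not itself a probability, the probability of a range is the area under the PDF over that range, which also equals the difference of the cumulative distribution function (CDF) at the range's endpoints. The sketch below checks this for a standard normal variable using `scipy.stats.norm` and `scipy.integrate.quad`; the interval [-1, 1] and the small standard deviation in the last line are arbitrary choices for illustration.

```python
from scipy import stats
from scipy.integrate import quad

a, b = -1.0, 1.0  # arbitrary interval chosen for illustration

# Area under the standard normal PDF between a and b (numerical integration)
area, _ = quad(stats.norm.pdf, a, b)

# The same probability from the CDF: P(a <= X <= b) = F(b) - F(a)
via_cdf = stats.norm.cdf(b) - stats.norm.cdf(a)

print(f"integral of pdf: {area:.4f}")
print(f"cdf difference:  {via_cdf:.4f}")

# A density value can exceed 1, e.g. for a normal with a small standard deviation
print("peak density with sigma = 0.1:", stats.norm.pdf(0, loc=0, scale=0.1))
```

Both routes give roughly 0.6827, the familiar probability of landing within one standard deviation of the mean, while the last line shows that a density can be larger than 1 without any contradiction.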

Broadly speaking, probability distributions can be classified as shown in the following image.

In the section above, we looked at what the discrete and continuous probability distributions are. The next section describes the subcategories of these two main categories.

Discrete probability distribution

Below are some popular distributions in the discrete probability distribution category, all of which are available in Python through the `scipy.stats` module.

Binomial distribution

The binomial distribution gives the probability of observing a given number of successes in a fixed number n of independent trials, where each trial has only two possible outcomes (yes/no, positive/negative) and the same probability of success p. It is primarily used to model sequences of such trials.

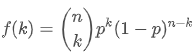

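Each such two-outcome trial is called a Bernoulli trial (or Bernoulli experiment). The probability mass function of the binomial distribution, for n trials with success probability p, is:

$$P(X = k) = \binom{n}{k} p^k (1 - p)^{n - k}$$

where k ∈ {0, 1, …, n} and 0 ≤ p ≤ 1.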
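As a quick check of the formula, the sketch below evaluates it directly with Python's `math.comb` and compares the result with `scipy.stats.binom.pmf`. The helper name `binomial_pmf` and the values n = 20 and p = 0.3 are hypothetical choices for illustration.

```python
import math
from scipy import stats

def binomial_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p), computed straight from the formula (hypothetical helper)."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)

n, p = 20, 0.3
ks = list(range(n + 1))  # every possible number of successes 0..n
manual = [binomial_pmf(k, n, p) for k in ks]
library = stats.binom.pmf(ks, n, p)

for k in (0, 6, 20):
    print(k, round(manual[k], 6), round(float(library[k]), 6))

# The probabilities over k = 0..n sum to 1
print("sum:", sum(manual))
```

The hand-written version agrees with SciPy, which is convenient to know when you want to see exactly which terms the library is computing.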

The following code plots the binomial PMF for n = 20 trials and several values of p.

```python
import numpy as np
import matplotlib.pyplot as plt
import scipy.stats as stats

x = np.arange(0, 25)  # values at which to evaluate the PMF (zero above n = 20)
for prob in range(3, 10, 3):
    p = 0.1 * prob  # success probabilities 0.3, 0.6, 0.9
    binom = stats.binom.pmf(x, 20, p)
    plt.plot(x, binom, '-o', label="p = {:.1f}".format(p))

plt.xlabel('Random Variable', fontsize=12)
plt.ylabel('Probability', fontsize=12)
plt.title("Binomial Distribution varying p")
plt.legend()
plt.show()
```

Output:

The graph visualizes the binomial distribution.

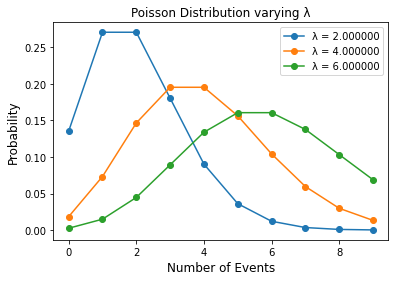
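The plot above shows the PMF itself rather than random draws. To actually generate binomial random variables, one option is `scipy.stats.binom.rvs`. The sketch below draws samples and compares their mean and variance with the theoretical values np and np(1 - p); the sample size and seed are arbitrary assumptions.

```python
from scipy import stats

n, p = 20, 0.3
# Draw 100,000 binomial random variables (number of successes out of n trials)
samples = stats.binom.rvs(n, p, size=100_000, random_state=42)

print("empirical mean:", samples.mean(), " theoretical:", n * p)
print("empirical var: ", samples.var(), " theoretical:", n * p * (1 - p))
```

With this many draws the empirical values land very close to 6 and 4.2, and a histogram of `samples` would reproduce the p = 0.3 curve from the plot.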

Poisson distribution

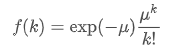

The Poisson distribution is a discrete probability distribution that gives the probability of a given number of events occurring in a fixed interval of time. More formally, it models the number of times an event occurs in a particular time period, assuming the events occur independently and at a constant average rate λ. It is named after the French mathematician Siméon Denis Poisson. It is primarily used when the variable of interest is a discrete count of events. The probability mass function of the Poisson distribution is:

$$P(X = k) = \frac{\lambda^k e^{-\lambda}}{k!}$$

for k = 0, 1, 2, … (k ≥ 0) and λ > 0.
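As with the binomial case, the formula can be checked directly. The sketch below evaluates it with `math.exp` and `math.factorial` and compares the result with `scipy.stats.poisson.pmf`; the helper name `poisson_pmf` and the rate λ = 4 are hypothetical choices for illustration.

```python
import math
from scipy import stats

def poisson_pmf(k, lam):
    """P(X = k) for X ~ Poisson(lam), computed straight from the formula (hypothetical helper)."""
    return lam**k * math.exp(-lam) / math.factorial(k)

lam = 4  # arbitrary average number of events per interval
ks = list(range(6))
manual = [poisson_pmf(k, lam) for k in ks]
library = stats.poisson.pmf(ks, lam)

for k, m, l in zip(ks, manual, library):
    print(f"k={k}: formula={m:.6f}  scipy={l:.6f}")
```

Unlike the binomial distribution, k has no upper limit here, but the probabilities shrink quickly, so summing the formula over the first hundred values of k already gives 1 to within floating-point precision.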

The following code plots the Poisson PMF for several values of λ.

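```python
import numpy as np
import matplotlib.pyplot as plt
import scipy.stats as stats

n = np.arange(0, 10)  # numbers of events k = 0..9
for lambd in range(2, 8, 2):  # average rates λ = 2, 4, 6
    poisson = stats.poisson.pmf(n, lambd)
    plt.plot(n, poisson, '-o', label="λ = {:d}".format(lambd))

plt.xlabel('Number of Events', fontsize=12)
plt.ylabel('Probability', fontsize=12)
plt.title("Poisson Distribution varying λ")
plt.legend()
plt.show()
```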

Output:

Continuous probability distribution

Here are some popular distributions in the continuous probability distribution category that are available in Python.

Normal distribution

The normal distribution, also called the Gaussian distribution, is a continuous probability distribution for a real-valued random variable. Its probability density function, with mean μ and standard deviation σ, is:

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}} \, e^{-\frac{(x - \mu)^2}{2\sigma^2}}$$

for a real number x.
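To connect the formula with the library call used in the plot, the sketch below implements the density directly and compares it with `scipy.stats.norm.pdf`, where `loc` is the mean μ and `scale` is the standard deviation σ. The helper name `normal_pdf` and the test points are hypothetical; μ = 0 and σ = 10 match the plot that follows.

```python
import math
from scipy import stats

def normal_pdf(x, mu, sigma):
    """Normal density at x, computed straight from the formula (hypothetical helper)."""
    coeff = 1.0 / (sigma * math.sqrt(2 * math.pi))
    return coeff * math.exp(-((x - mu) ** 2) / (2 * sigma**2))

mu, sigma = 0.0, 10.0
for x in (-20.0, 0.0, 15.0):
    print(x, normal_pdf(x, mu, sigma), stats.norm.pdf(x, loc=mu, scale=sigma))
```

The density peaks at x = μ with height 1/(σ√(2π)), which is why a wide curve such as σ = 10 has a low peak.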

The code below plots the probability density function of a normal distribution with mean 0 and standard deviation 10.

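```python
import numpy as np
import matplotlib.pyplot as plt
import scipy.stats as stats

n = np.arange(-70, 70)  # points on the x-axis
norm = stats.norm.pdf(n, 0, 10)  # density of a normal with mean 0 and standard deviation 10

plt.plot(n, norm)
plt.xlabel('x', fontsize=12)
plt.ylabel('Probability density', fontsize=12)
plt.title("Normal Distribution of x")
plt.show()
```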

Output:

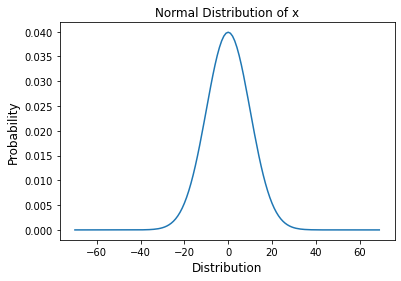

Uniform distribution

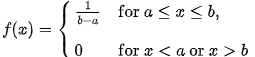

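The continuous uniform distribution assigns the same probability density to every value in an interval [a, b], so all subintervals of equal length are equally likely. Its probability density function is:

$$f(x) = \begin{cases} \dfrac{1}{b - a} & \text{for } a \le x \le b \\ 0 & \text{otherwise} \end{cases}$$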
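In SciPy, the continuous uniform distribution is available as `scipy.stats.uniform`, which parameterizes U(a, b) with `loc=a` and `scale=b - a`. The minimal sketch below, with arbitrarily chosen endpoints a = 2 and b = 5, checks that the density is 1/(b - a) inside the interval and 0 outside, and that a subinterval of length 1 has probability 1/(b - a).

```python
from scipy import stats

a, b = 2.0, 5.0  # arbitrary interval endpoints
U = stats.uniform(loc=a, scale=b - a)  # SciPy's U(a, b): loc = a, scale = b - a

dens = U.pdf([1.0, 2.5, 4.9, 6.0])  # 0 outside [a, b], 1/(b - a) inside
prob = U.cdf(3.5) - U.cdf(2.5)      # probability of a length-1 subinterval

print(dens)
print(prob)
```

Passing `scale=b` instead of `scale=b - a` is an easy mistake that silently shifts the upper endpoint, so the explicit subtraction is worth keeping.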

The idea is easiest to picture with its discrete counterpart, rolling a fair die, where each face has the same probability of 1/6. You can use the following code to plot the probabilities of rolling a fair die.

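```python
import numpy as np
import matplotlib.pyplot as plt

probs = np.full(6, 1/6)  # each face has probability 1/6
face = [1, 2, 3, 4, 5, 6]

plt.bar(face, probs)
plt.ylabel('Probability', fontsize=12)
plt.xlabel('Dice Roll Outcome', fontsize=12)
plt.title('Fair Dice Uniform Distribution', fontsize=12)
axes = plt.gca()
axes.set_ylim([0, 1])  # show the full 0..1 probability range
plt.show()
```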

Output:

Final words

In this article, we have looked at what a probability distribution is and how to classify it. Different probability distributions are available depending on the nature of the data. We also discussed some of the most important distributions and visualized them in Python.