PyTorch is one of the most widely used frameworks for deep learning. Its straightforward, Pythonic way of building models makes it easier to learn than many other deep learning frameworks. In this article, we build an end-to-end deep learning model, aimed at beginner-level machine learning practitioners. The tutorial shows how to define and use a convolutional neural network (CNN) in a simple way, explaining each step in detail.

The article covers two main points: what a convolutional neural network (CNN) is, and how to implement one with PyTorch.

CNN (Convolutional neural network)

CNNs are deep neural network models that were originally designed for analyzing 2D image input but can now also handle 1D and 3D data. The core of a convolutional neural network is made up of one or more convolutional layers, each of which performs a “convolution”: multiplying the layer’s inputs element-wise by a small n x n matrix of learnable weights and summing the results.

Unlike in a traditional fully connected network, this multiplication is carried out with a “window” that traverses the image, known as a filter or kernel. Each time the filter moves to a new position, its weights are multiplied by the input values under it and the products are summed. The resulting values, one for each filter position, form a two-dimensional matrix that represents the features extracted from the underlying image. This output matrix is referred to as a “feature map.”
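
To make the sliding-window idea concrete, here is a minimal, framework-free sketch of the operation on a tiny 4 x 4 "image". The function name `conv2d_valid` and the sample values are illustrative assumptions, not part of PyTorch; the sketch assumes a stride of 1 and no padding.

```python
def conv2d_valid(image, kernel):
    """Slide a square kernel over the image (stride 1, no padding)."""
    k = len(kernel)
    out_h = len(image) - k + 1
    out_w = len(image[0]) - k + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Multiply the window element-wise by the kernel weights and sum.
            total = sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(k)
                for dj in range(k)
            )
            row.append(total)
        feature_map.append(row)
    return feature_map


# Hypothetical 4 x 4 input and 2 x 2 kernel, chosen only for illustration.
image = [
    [1, 2, 3, 0],
    [4, 5, 6, 1],
    [7, 8, 9, 2],
    [0, 1, 2, 3],
]
kernel = [
    [1, 0],
    [0, -1],
]

feature_map = conv2d_valid(image, kernel)
print(feature_map)  # [[-4, -4, 2], [-4, -4, 4], [6, 6, 6]]
```

A 2 x 2 kernel fits into a 4 x 4 image at 3 x 3 positions, so the feature map is smaller than the input. The same shrinking effect shows up later when the layer sizes of the model are chosen.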

Once the feature map is computed, each of its values is passed through a non-linear activation function (for example, ReLU) before being fed to the next convolutional layer. Fully connected layers take the output of the convolutional layer sequence and generate the final prediction, which is usually a label that describes the image.

To summarize, the feature-extraction part of a CNN is built from two kinds of layers: convolutional layers and pooling layers. The convolutional layer generates a feature map using the operations described above, and the pooling layer further reduces the size of the feature map. The most common pooling layers are max pooling and average pooling, which take the maximum and the average value, respectively, over a window of the given size (e.g., 2 x 2, 3 x 3).
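
As a rough illustration of the two pooling variants, the sketch below applies a 2 x 2 window to a small feature map in plain Python. The helper `pool2d` and the sample values are assumptions made for this example; it assumes non-overlapping windows, i.e. a stride equal to the window size.

```python
def pool2d(feature_map, size, mode="max"):
    """Non-overlapping pooling with a size x size window."""
    pooled = []
    for i in range(0, len(feature_map) - size + 1, size):
        row = []
        for j in range(0, len(feature_map[0]) - size + 1, size):
            window = [
                feature_map[i + di][j + dj]
                for di in range(size)
                for dj in range(size)
            ]
            if mode == "max":
                row.append(max(window))
            else:
                row.append(sum(window) / len(window))
        pooled.append(row)
    return pooled


# Hypothetical 4 x 4 feature map, chosen only for illustration.
fmap = [
    [1, 3, 2, 4],
    [5, 7, 6, 8],
    [9, 2, 1, 0],
    [3, 4, 5, 6],
]

max_pooled = pool2d(fmap, 2, "max")
avg_pooled = pool2d(fmap, 2, "avg")
print(max_pooled)  # [[7, 8], [9, 6]]
print(avg_pooled)  # [[4.0, 5.0], [4.5, 3.0]]
```

In both cases the 4 x 4 map shrinks to 2 x 2; only the rule for summarizing each window differs.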

Next, we’ll look at how we can use PyTorch to build such a CNN model.

CNN Implementation using PyTorch

PyTorch is a well-known and widely used deep learning library, especially in academic research. It is an open-source machine learning framework that reduces the time required to move from research prototyping to production deployment. This implementation will be broken down into major steps such as data loading, model construction, model training, and model testing.

In this section, let’s walk through the process step by step.

Now let’s quickly import all of the required dependencies.

    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    import torchvision
    import torchvision.transforms as transforms
    import matplotlib.pyplot as plt

Dataset preparation

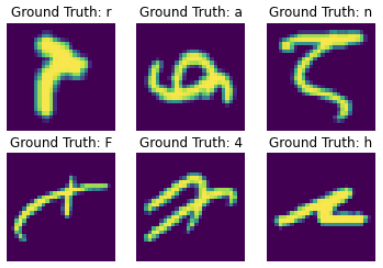

As previously discussed, let us begin by loading the dataset. The dataset used is EMNIST, which is an expanded version of the MNIST dataset that includes handwritten digits as well as small and capital handwritten letters.

The training subset of the balanced split used here contains 112,800 grayscale images of 28 x 28 pixels, spread over 47 classes. The train argument of the torchvision API selects whether the training or the test subset is loaded.

First, we define the pre-processing transformations that are applied to the images of both the training and the test datasets as they are loaded.

Next, we wrap each dataset in a data loader, which is especially handy when there are many thousands of images and loading all of them at once would put a tremendous strain on the system. The data loader iterates over the dataset efficiently and serves it in batches.

    # apply transformation
    transform = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.5,), (0.5,)),
    ])

    # download the data
    training_data = torchvision.datasets.EMNIST(root='contents/', download=True, transform=transform, train=True, split='balanced')
    test_data = torchvision.datasets.EMNIST(root='contents/', download=True, transform=transform, train=False, split='balanced')

    # build the data loader
    train_loader = torch.utils.data.DataLoader(dataset=training_data,
                                               batch_size=128,
                                               shuffle=True)
    test_loader = torch.utils.data.DataLoader(dataset=test_data,
                                              batch_size=128,
                                              shuffle=True)
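
To see what the transformation and the batch size amount to numerically, here is a small framework-free sketch. The helper names are hypothetical. It assumes that ToTensor scales 8-bit pixel values to the range 0 to 1, that Normalize then computes (x - mean) / std, and that the balanced training split has 112,800 images.

```python
import math


def to_tensor_then_normalize(pixel, mean=0.5, std=0.5):
    """Mimic ToTensor (0..255 -> 0..1) followed by Normalize(mean, std)."""
    scaled = pixel / 255.0
    return (scaled - mean) / std


def batches_per_epoch(num_samples, batch_size):
    """Number of batches when the last, smaller batch is kept."""
    return math.ceil(num_samples / batch_size)


darkest = to_tensor_then_normalize(0)
brightest = to_tensor_then_normalize(255)
num_batches = batches_per_epoch(112_800, 128)

print(darkest, brightest)  # -1.0 1.0
print(num_batches)         # 882
```

With a mean and standard deviation of 0.5, pixel values end up in the range -1 to 1, and one pass over the training data takes 882 batches of up to 128 images.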

Here are some examples from our dataset.

Constructing the model

The next step is to define the model. We define it the same way an ordinary Python class would be defined. To begin, we create a new class that inherits from PyTorch’s nn.Module class. This is essential when building a neural network because the base class provides many useful methods, such as parameter tracking and moving the model between devices.

Our neural network’s layers must then be defined. This is done in the __init__ method of the class. We simply create each layer and assign it to a named attribute: in this case, two convolutional layers and a fully connected layer.

Finally, a forward method must be defined in our class. The goal of this method is to specify the order in which the various layers process the input data. We can code it as a combination of all of the above.

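    class CNNModel(nn.Module):
        def __init__(self):
            super(CNNModel, self).__init__()
            # 1 input channel (grayscale), 32 feature maps, 5 x 5 kernel, stride 1
            self.conv1 = nn.Conv2d(in_channels=1, out_channels=32, kernel_size=5, stride=1)
            # 32 input channels must match the output channels of conv1
            self.conv2 = nn.Conv2d(32, 64, 5, 1)
            # 64 feature maps of 20 x 20 in, 47 class scores out
            self.fc = nn.Linear(64*20*20, 47)

        def forward(self, x):
            x = F.relu(self.conv1(x))
            x = F.relu(self.conv2(x))
            # a pooling window of 1 leaves the 20 x 20 feature maps unchanged
            x = F.max_pool2d(x, 1)
            x = torch.flatten(x, 1)
            x = self.fc(x)
            output = F.log_softmax(x, dim=1)
            return output

    # instantiate the model
    model = CNNModel()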

Guidelines for building the model

Looking at the layers and their sizes, one might think that these values can be chosen arbitrarily, but that is not the case. Choosing them arbitrarily leads to a dimension-mismatch error.

This error is caused primarily by the connection between the last convolutional layer and the Linear layer. In PyTorch, a convolutional layer takes a series of mandatory arguments (input channels, output channels, and kernel size) and optionally a stride, as shown for the first convolutional layer.

The input channels cannot be set arbitrarily; they depend on the type of image: 1 for grayscale (black-and-white) images and 3 for RGB images. The number of output channels can be any number, but it must equal the number of input channels of the next layer.

Kernels are simply the filters that are used to create a feature map for a given image, and the stride specifies how far the kernel moves over the image at a time, where 1 means it moves one pixel per step.

The Linear layer, in turn, expects two essential dimensions: the input size and the output size. When a convolutional layer is connected to the Linear layer, the input size depends on the size of the feature maps. After the final convolutional layer, the spatial dimensions are no longer 28 x 28 pixels.

The following calculation can be used to determine the proper number of input features for the linear layer:

- A 28 x 28 image is supplied to the first layer. A 5 x 5 convolution with stride 1 and no padding reduces each dimension by 4 pixels (2 on each side), giving 24 x 24 at the output of the first layer.

- The next layer applies the same 5 x 5 filter size, which gives 20 x 20 as the spatial size of each feature map at the output of the second layer.

With 64 output channels from the second convolutional layer, the linear layer’s input size is therefore 64*20*20. If you add more layers, repeat the same kernel calculation to get the input size of the fully connected layer.
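
The calculation above can be sketched as a short helper. The function name `conv_output_size` is an assumption made for this example; the formula is the standard one for a convolution without dilation, floor((n + 2p - k) / s) + 1.

```python
def conv_output_size(size, kernel_size, stride=1, padding=0):
    """Spatial output size of a convolution (no dilation)."""
    return (size + 2 * padding - kernel_size) // stride + 1


size = 28                  # EMNIST images are 28 x 28
sizes = [size]
for kernel_size in (5, 5):  # the two 5 x 5 convolutional layers
    size = conv_output_size(size, kernel_size)
    sizes.append(size)

out_channels = 64           # output channels of the second conv layer
fc_inputs = out_channels * size * size

print(sizes)      # [28, 24, 20]
print(fc_inputs)  # 25600
```

The result, 25600, is the same number as 64*20*20 in the model definition. Changing a kernel size, stride, or padding changes this number, which is why the linear layer's input size has to be recomputed whenever the convolutional layers change.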

Now, let’s look at the model definition.

In the forward method, the ReLU activation function is applied after each convolutional layer, followed by a max-pooling operation (with a window of 1, which leaves the feature maps unchanged) and a flattening step. Finally, the fully connected layer’s output is passed through a log-softmax function, which turns the scores into log-probabilities for classification.

Model Compilation

Now, let us set up the model for training by defining the loss function and the optimizer. Cross-entropy is used as the loss function, and a stochastic gradient descent (SGD) optimizer is used to reduce the loss during training.

    # loss function
    criterion = nn.CrossEntropyLoss()

    # optimizer
    optimizer = torch.optim.SGD(model.parameters(), lr=0.001)
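
One detail is worth a closer look: the model already ends with a log-softmax, while nn.CrossEntropyLoss applies a log-softmax of its own before taking the negative log-likelihood. The framework-free sketch below, with hypothetical helper names and made-up scores, suggests why this combination still gives the expected loss: applying log-softmax to values that are already log-probabilities leaves them unchanged.

```python
import math


def log_softmax(scores):
    """Numerically stable log-softmax over a list of scores."""
    m = max(scores)
    log_sum = m + math.log(sum(math.exp(s - m) for s in scores))
    return [s - log_sum for s in scores]


def nll(log_probs, target):
    """Negative log-likelihood of the target class."""
    return -log_probs[target]


def cross_entropy(scores, target):
    """Cross-entropy as log-softmax followed by negative log-likelihood."""
    return nll(log_softmax(scores), target)


logits = [2.0, 1.0, 0.1]  # made-up raw scores for three classes
target = 0

log_probs = log_softmax(logits)
loss_from_logits = cross_entropy(logits, target)
loss_from_log_probs = cross_entropy(log_probs, target)

print(abs(loss_from_logits - loss_from_log_probs) < 1e-9)  # True
```

A common alternative is to return the raw scores from forward and let the cross-entropy loss do the log-softmax, or to keep the log-softmax and pair it with a negative log-likelihood loss, as the test function in this article does.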

Procedure for training, testing, and evaluation

With all of the pieces defined, it is time to train the model. PyTorch does not provide a built-in training routine, so the training loop has to be written by hand. The steps are as follows:

Start by iterating over the epochs, and within each epoch over the batches of the training data. Move the images and labels to the device in use (GPU or CPU). In the forward pass, the model makes predictions, and the loss is computed from those predictions and the actual labels.

Then, to improve the model, a backward pass is performed. The gradients are first reset to zero with the optimizer.zero_grad() function, because PyTorch accumulates gradients across backward passes. The loss.backward() function then computes the new gradients.

Finally, the weights are updated with the optimizer.step() function.
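
The three steps can be imitated on a single scalar weight in plain Python. This is only a loose analogy, not PyTorch code: the `Weight` class and the `backward` and `step` helpers are hypothetical stand-ins for a parameter, loss.backward(), and optimizer.step(), and the loss is assumed to be the squared error (w*x - y)^2.

```python
class Weight:
    """A scalar parameter whose gradient accumulates between resets."""

    def __init__(self, value):
        self.value = value
        self.grad = 0.0


def backward(w, x, y):
    """Add the gradient of (w*x - y)**2 with respect to w to w.grad."""
    w.grad += 2 * (w.value * x - y) * x


def step(w, lr):
    """Plain gradient descent update."""
    w.value -= lr * w.grad


# Without a reset, two backward passes add up their gradients.
probe = Weight(0.0)
backward(probe, x=2.0, y=6.0)
backward(probe, x=2.0, y=6.0)
print(probe.grad)  # -48.0

# With a reset before each backward pass, the weight converges to y / x = 3.
w = Weight(0.0)
for _ in range(50):
    w.grad = 0.0                # plays the role of optimizer.zero_grad()
    backward(w, x=2.0, y=6.0)   # plays the role of loss.backward()
    step(w, lr=0.1)             # plays the role of optimizer.step()

print(round(w.value, 6))  # 3.0
```

The first part shows why the reset matters: skipping it would make every update use the sum of all gradients seen so far instead of the gradient of the current batch.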

    # select the working device and move the model to it
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    model.to(device)

    # loss history
    train_loss = []
    test_losses = []

    def train(e):
        # iterate over the training batches
        for i, (images, labels) in enumerate(train_loader):
            # load data on to device
            images = images.to(device)
            labels = labels.to(device)

            # Forward pass
            outputs = model(images)
            loss = criterion(outputs, labels)

            # Backward and optimize
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

        # record and report the loss of the last batch of the epoch
        train_loss.append(loss.item())
        print('Epoch [{}/{}], Train Loss: {:.4f}'.format(e+1, 10, loss.item()))

The testing procedure is similar to the training procedure, except that no gradients are calculated because no weights are being updated. We therefore enclose the code in torch.no_grad(). We then apply our model to each batch and count how many predictions are correct.

    def test():
        test_loss = 0
        correct = 0
        total = 0
        with torch.no_grad():
            for images, labels in test_loader:
                images = images.to(device)
                labels = labels.to(device)
                outputs = model(images)
                # sum the per-sample losses; averaged over the dataset below
                test_loss += F.nll_loss(outputs, labels, reduction='sum').item()
                # the index of the largest log-probability is the predicted class
                _, predicted = torch.max(outputs, 1)
                total += labels.size(0)
                correct += (predicted == labels).sum().item()

        test_loss /= len(test_loader.dataset)
        test_losses.append(test_loss)
        print('Test Accuracy: {:.4f} %, Test loss: {:.4f}'.format(100 * correct / total, test_loss))
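
The accuracy bookkeeping in the test function can be traced by hand on a tiny batch. The sketch below is framework-free, with hypothetical helper names and made-up log-probabilities; picking the index of the largest value per row stands in for torch.max(outputs, 1).

```python
def argmax(values):
    """Index of the largest value in a list."""
    return max(range(len(values)), key=lambda i: values[i])


def count_correct(batch_outputs, batch_labels):
    """Number of rows whose highest-scoring class equals the label."""
    predicted = [argmax(row) for row in batch_outputs]
    return sum(int(p == t) for p, t in zip(predicted, batch_labels))


# Made-up log-probabilities for a batch of 4 images and 3 classes.
outputs = [
    [-0.2, -2.0, -3.0],
    [-1.5, -0.4, -2.2],
    [-2.5, -1.9, -0.3],
    [-0.9, -0.8, -1.7],
]
labels = [0, 1, 2, 0]

correct = count_correct(outputs, labels)
accuracy = 100 * correct / len(labels)
print(correct, accuracy)  # 3 75.0
```

The last image is classified as class 1 instead of class 0, so 3 of the 4 predictions are correct.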

Training and testing are run in alternation, with one test pass after each training epoch, using the two functions defined above.

    for i in range(10):
        train(i)
        test()

This yields a testing accuracy of around 79 percent. Refer to the notebook for more information.

Conclusion

This article discussed the convolutional neural network and implemented one in practice using PyTorch. Compared to other frameworks, PyTorch is designed to be more developer-centric. Using it well requires understanding how the pieces of the model fit together; in this case, we built a CNN and traced the convolution operation through the layers to get the layer sizes right. For a beginner, PyTorch is a good choice: it helps sharpen your understanding of the concepts as you construct the model.